How a joint UM6P–EPFL researcher is tackling one of medicine’s quietest risks

Artificial intelligence has become increasingly adept at answering medical questions. At UM6P, a joint UM6P–EPFL PhD researcher is focused on a more difficult task: teaching algorithms when not to answer at all.

Aurel Davy Tchokponhoue is a PhD student at the Faculty of Medical Sciences, jointly supervised by University Mohammed VI Polytechnic (UM6P) and the École Polytechnique Fédérale de Lausanne (EPFL) in Switzerland as part of the Excellence in Africa – 100 PhDs for Africa program.

His research focuses on breast cancer diagnosis, not from images or scans, but from gene expression data, the molecular signatures that determine how a tumor behaves and how it should be treated.

“The issue isn’t that AI gets everything wrong,” Tchokponhoue says. “It’s that it can be very confident when it shouldn’t be.”

That confidence, when misplaced, is more than a technical flaw. In a clinical setting, it can be a fatal risk.

The danger of certainty in an uncertain setting

Breast cancer is not a single disease. Its molecular subtypes (Luminal A, Luminal B, HER2-enriched, Basal-like) respond differently to treatment, carry different prognoses, and demand different therapeutic strategies. Identifying the subtype correctly is essential.

Deep learning models have shown impressive accuracy in classifying these subtypes from transcriptomic data. Yet most of them share a problematic trait: they always produce an answer.

“In real hospitals, data is messy,” Tchokponhoue explains. “Different labs, different sequencing protocols, different patient populations. Models are trained on one reality and deployed in another.”

When confronted with unfamiliar data, many AI systems do not hesitate. They extrapolate, and often do so confidently.

“For a clinician, that’s the worst case,” he says. “A wrong answer that looks certain.”

Why uncertainty matters more than accuracy

Tchokponhoue’s work, carried out at UM6P’s Faculty of Medical Sciences in collaboration with EPFL, does not attempt to outperform existing models on raw classification accuracy. Instead, it asks a more foundational question: how reliable is a prediction, and how can that reliability be measured?

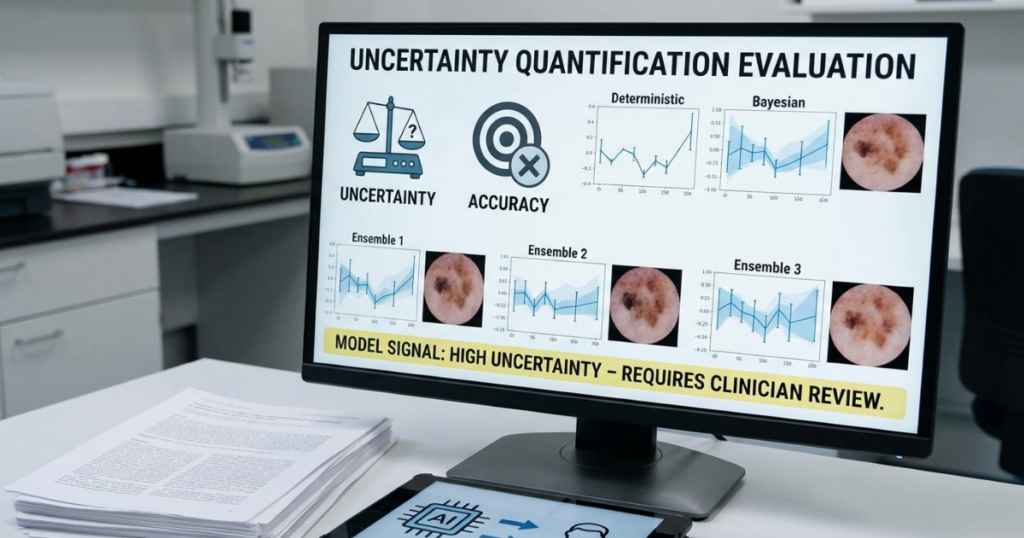

The study systematically evaluates five families of uncertainty-quantification methods, including deterministic models, Bayesian approaches, and ensemble techniques, to assess not just how often predictions are correct, but how well models express doubt when they should.
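One common way to check whether a model "expresses doubt when it should" is to compare its stated confidence with how often it is actually right. The sketch below computes expected calibration error (ECE) for that purpose. The article does not say which metrics the study uses, so ECE is an assumption chosen for illustration, and the function name and bin count are hypothetical.

```python
def expected_calibration_error(confidences, correct, n_bins=5):
    """Weighted average gap between mean confidence and accuracy across bins.

    confidences: top-class probabilities in [0, 1], one per prediction.
    correct: booleans, True where the prediction matched the true label.
    n_bins: number of equal-width confidence bins (illustrative default).
    """
    bins = [[] for _ in range(n_bins)]
    for conf, ok in zip(confidences, correct):
        # A confidence of exactly 1.0 is placed in the last bin.
        index = min(int(conf * n_bins), n_bins - 1)
        bins[index].append((conf, ok))

    total = len(confidences)
    ece = 0.0
    for members in bins:
        if not members:
            continue  # empty bins contribute nothing
        mean_conf = sum(c for c, _ in members) / len(members)
        accuracy = sum(1 for _, ok in members if ok) / len(members)
        ece += (len(members) / total) * abs(mean_conf - accuracy)
    return ece


# Made-up example: the model claims 90% confidence but is right 3 times out of 4.
overconfident = expected_calibration_error(
    [0.9, 0.9, 0.9, 0.9], [True, True, True, False]
)
```

A value near zero means stated confidence tracks observed accuracy. Larger values indicate that confidence and accuracy diverge, which includes the overconfidence the article warns about.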

“The goal is not to replace doctors,” he says. “It’s to give them a signal. A way to distinguish between predictions that are safe to trust and those that need a second look.”

In practical terms, this allows an AI system to abstain. When uncertainty is high, the model can flag a case for human review rather than forcing a decision.

“That changes the relationship between AI and clinicians,” Tchokponhoue notes. “It turns the model into an assistant, not an authority.”
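The abstention idea can be sketched in a few lines. The example below is a minimal illustration, not the study's method: it assumes the model outputs a probability for each of the four subtypes, uses Shannon entropy as the uncertainty score, and applies a hypothetical threshold (`max_entropy_fraction`) above which the case is flagged for human review.

```python
import math

SUBTYPES = ["Luminal A", "Luminal B", "HER2-enriched", "Basal-like"]


def predictive_entropy(probs):
    """Shannon entropy (in nats) of a probability vector; higher means more doubt."""
    return -sum(p * math.log(p) for p in probs if p > 0)


def predict_or_abstain(probs, max_entropy_fraction=0.5):
    """Return the most likely subtype, or None to flag the case for review.

    max_entropy_fraction is a hypothetical threshold, expressed as a fraction
    of the largest possible entropy (the log of the number of classes).
    """
    threshold = max_entropy_fraction * math.log(len(probs))
    if predictive_entropy(probs) > threshold:
        return None  # too uncertain: defer to a clinician
    best = max(range(len(probs)), key=lambda i: probs[i])
    return SUBTYPES[best]


# Made-up probability vectors for illustration only.
confident_case = [0.94, 0.03, 0.02, 0.01]
ambiguous_case = [0.40, 0.35, 0.15, 0.10]

print(predict_or_abstain(confident_case))
print(predict_or_abstain(ambiguous_case))
```

With these inputs the first case yields a subtype and the second is deferred. In practice the threshold would be tuned on validation data, trading off how many cases are sent for review against how many confident errors slip through.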

Teaching models to recognize the unfamiliar

A central challenge in uncertainty research is evaluation. Real-world out-of-distribution cases — samples that differ meaningfully from training data — are rare, difficult to label, and ethically sensitive in medicine.

To address this, Tchokponhoue developed GMGS1 and GMGS2, two algorithms designed to generate synthetic but biologically plausible out-of-distribution gene expression data.

These synthetic samples allow researchers to test whether models can detect unfamiliar patterns rather than misclassifying them with confidence.

“We’re not trying to trick the model,” he explains. “We’re trying to expose it to situations it hasn’t seen, but could realistically encounter.”

The approach draws on generative modeling to simulate distributional shifts that mirror what happens when data comes from new populations, new technologies, or emerging clinical contexts.

No single solution, and that is the point

One of the study’s more restrained conclusions is also its most important: no single uncertainty method dominates across all dimensions.

Ensemble models tend to be cautious and sensitive to ambiguity. Bayesian approaches show stronger robustness under adversarial or shifted conditions.

“That’s not a weakness,” Tchokponhoue argues. “It’s a signal that hybrid systems may be the future.”

Rather than seeking a universal solution, the research points toward combining complementary approaches — systems that are accurate, cautious, and resilient at once.

In a clinical environment, that balance matters more than theoretical elegance.

The role of the UM6P–EPFL partnership

The structure of Tchokponhoue’s doctoral program is not incidental to the work. The joint supervision between UM6P and EPFL places the research at the intersection of applied medical need and methodological rigor.

“At UM6P, the focus is on impact and relevance,” he says. “At EPFL, the emphasis is on methodological soundness and theoretical depth. Together, they shape how the problem is framed.”

The Excellence in Africa – 100 PhDs for Africa initiative, he adds, is particularly significant in a domain where global inequities are stark.

“In many African contexts, late diagnosis and lack of specialists are structural problems,” Tchokponhoue says. “AI will not solve that alone. But if designed responsibly, it can help reduce the gap.”

For patients, the implications are indirect but tangible. A system that can signal uncertainty reduces the risk of inappropriate treatment, unnecessary side effects, and premature clinical decisions.

“It’s about protecting patients from overconfidence,” he says. “Not just from AI, but from any system that hides its limits.”

For clinicians, it offers something equally valuable: transparency.

“When a model tells you it’s unsure,” Tchokponhoue adds, “you’re still in control.”

Want to dive deeper?

Read the full article here.
